Introduction

Sometimes when developing on low-level stuff like a kernel, you face situations which completely freeze your system. While it's not that much of a problem on a development board, it's more annoying when it happens on a regular PC and you have to move off your chair to power cycle the beast, or worse, wait till you're home when developing remotely.

Usually this doesn't happen because your hardware is supposed to come with a hardware watchdog timer that will reboot the board if hung. But some boards don't have one, or sometimes it doesn't work well or you simply don't have the driver on your experimental kernel.

This article explains how to implement a USB-controlled watchdog for an ATX power supply. It can be controlled either by the target device itself (like any regular watchdog timer), or by another machine which is then able to power cycle the tested one.

Background

First, it is useful to recall how a watchdog timer works. It is a hardware counter that counts down from a configured value and triggers the system's reset once it reaches zero. The main system periodically refreshes this counter so that as long as the system runs, the counter cannot reach zero (e.g. it sets it to 10 seconds every second). If the system hangs, it stops refreshing the timer, and once the period is over, the reset line is triggered and the system reboots.
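The countdown-and-refresh behaviour described above can be sketched as a small simulation. This is only a toy model with hypothetical names (`WatchdogTimer`, `simulate`), not the firmware presented later; it assumes one tick per second and a 10-second timeout as in the example above.

```python
class WatchdogTimer:
    """Toy model of a watchdog: a down-counter that fires a reset at zero."""

    def __init__(self, timeout_s):
        self.timeout_s = timeout_s
        self.remaining = timeout_s
        self.reset_fired = False

    def refresh(self):
        # A healthy system reloads the counter before it expires.
        self.remaining = self.timeout_s

    def tick(self):
        # Called once per simulated second by the "hardware".
        if self.reset_fired:
            return
        self.remaining -= 1
        if self.remaining <= 0:
            self.reset_fired = True


def simulate(hang_at_s, total_s, timeout_s=10):
    """Return the second at which the reset fires, or None if it never does.

    The simulated system refreshes the timer every second up to and
    including second `hang_at_s`, then hangs and stops refreshing.
    """
    wdt = WatchdogTimer(timeout_s)
    for second in range(1, total_s + 1):
        if second <= hang_at_s:
            wdt.refresh()
        wdt.tick()
        if wdt.reset_fired:
            return second
    return None
```

With a system that hangs after its fifth refresh, the reset fires ten ticks after that last refresh; a system that never hangs never sees a reset.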

Second, some embedded boards do not easily expose certain connectors such as the reset pin, which makes it a bit harder to implement a hardware watchdog timer. But there is a solution : ATX power supplies are expected to drive the PWR_OK signal high once the voltage is correct, and to drive it low when it's out of spec. More precisely, they connect it to a pull-up resistor, and actively drive it low when the voltage is not within spec. The motherboard is supposed to watch this signal and hold its reset line until this signal is high. This is very convenient because it means we can connect to this signal without even cutting any wire, and drive it low to force the board to reset itself.

So we have our reset input :-)

Locating the PWR_OK signal

This signal is on the grey wire connected to pin 8 of the ATX connector (either 20 or 24 pin) as shown below. In case of doubt, please use your favorite search engine to look for the ATX pinout. But be careful, the pinout is often shown from the pin side (front view) and not from the wire side (rear view) :

Controlling the signal

We will need a timer and a way to communicate with the host and/or with another host. Initially I thought about using a serial port, but they are not always available nowadays. Then I thought about using the PS/2 connector and periodically sending commands via the keyboard controller, but some boards do not have such a connector. However, almost all boards nowadays have plenty of USB ports. So implementing a USB slave device looks like a good idea, and it makes it easy to unplug the module and connect it to another machine if desired.

The difficulty with USB is to find a suitable chip. But not only this is no longer a problem thanks to

the V-USB stack but it ends up possibly be the cheapest solution one can think of. This stack is available for very affordable micro-controllers like Atmel (now Microchip)'s

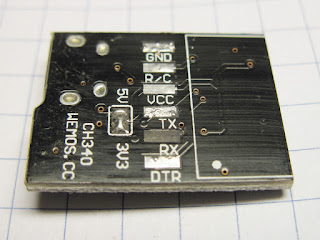

ATTiny85. which can be purchased already soldered on a board with all required external components for around $1.50 to $2.00 depending on the board and form factor. Models sold as "Digistump Digispark", "Adafruit Trinket" as well as various so called "ATTINY85 development/programmer board" equipped with a micro-USB connector are all suitable. Two such devices have been successfully used to date :

One point to keep in mind is that the PWR_OK pin can be very sensitive on the motherboard. The ATX specification says that a power supply is required to supply at least 200 µA (i.e. 5V through a 25k resistor), and a motherboard must consider any voltage lower than 2.4V as low. This means that simply connecting a 10k resistor between this signal and the ground may be enough to drive it low with some power supplies. As long as the module is always connected, it's not a problem. But if the module is to be left disconnected from USB, hanging out of the machine, then it will drain the signal through the power lines and will drive it low, preventing the machine from powering up. One pin on the ATTiny85 doesn't cause this : PB5. It's shared with the RESET signal and supports a high voltage, so it doesn't contain any pull-up or clamp diode. The tests confirmed that indeed, when the module is not connected, only this pin doesn't prevent the board from powering up.
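To see why a 10k load is already too much, the figures above can be put into a simple voltage divider. This is a rough sketch that assumes the weakest compliant supply behaves like an ideal 25k pull-up to 5V; real supplies will differ, and the function names are only illustrative.

```python
LOW_THRESHOLD_V = 2.4  # the board treats anything below this as low


def pwr_ok_voltage(load_ohm, pullup_ohm=25_000.0, supply_v=5.0):
    """Voltage on PWR_OK when a resistive load to ground fights the pull-up."""
    return supply_v * load_ohm / (pullup_ohm + load_ohm)


def reads_low(load_ohm, pullup_ohm=25_000.0):
    """True if the motherboard would see PWR_OK as low with this load."""
    return pwr_ok_voltage(load_ohm, pullup_ohm) < LOW_THRESHOLD_V


if __name__ == "__main__":
    for load in (10_000.0, 100_000.0):
        v = pwr_ok_voltage(load)
        print(f"{load / 1000:.0f}k load: {v:.2f} V, low={reads_low(load)}")
```

Under this assumption a 10k load leaves only about 1.43 V on the signal, well below the 2.4 V threshold, while a 100k load leaves 4 V.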

There are 3 solutions to this problem :

- use the PB5 pin as the output to control PWR_OK. I'm not happy with doing this as it won't be possible to reprogram the device anymore without providing a high voltage to the chip.

- permanently connect the +5V to the device by connecting it to the power supply's +5V line. I'm not fond of this method either, as taking the +5V outside of a machine isn't always safe if there aren't efficient protections (e.g. wires getting accidentally pulled off could easily burn if shorted). On some boards this may also require disconnecting the +5V from the micro-USB connector to avoid leaking the current into the USB host.

- add a transistor to only let the current flow when the board is powered. This sounds like the best solution, and only requires one external component.

The boards above all include an LED connected to PB1. We'll use it to indicate when the PWR_OK signal is asserted by the module and to help debugging. It's always convenient to be able to test a driver without having to power down the machine to confirm it works! On such boards, PB3 and PB4 are used for the USB connection. PB5 is shared with RESET, which only leaves PB0 and PB2 available. Thus we'll use PB0 here to control the PWR_OK signal.

Additionally, in order to protect the device against cheap power supplies which would directly connect PWR_OK to the +5V output, we'll put a series resistor to protect the transistor and the micro-controller against a short-circuit. The micro-controller is supposed to be able to source and sink 40 mA per output pin. By using 180 ohm, we'll limit the output current to 27 mA in the worst case (just enough to light an LED), which makes it possible to safely control a wide range of power supplies.
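The worst-case figure can be checked with Ohm's law. The sketch below assumes the full 5V appears across the resistor (PWR_OK shorted to +5V, no other voltage drop), which gives a value slightly above the 27 mA quoted above; the power figure is my own addition to help pick a resistor rating.

```python
PIN_LIMIT_MA = 40.0  # per-pin limit mentioned in the text


def short_circuit_current_ma(supply_v=5.0, series_ohm=180.0):
    """Current through the series resistor if PWR_OK is tied straight to +5V."""
    return supply_v / series_ohm * 1000.0


def resistor_power_mw(supply_v=5.0, series_ohm=180.0):
    """Power dissipated in the series resistor in that same worst case."""
    return supply_v ** 2 / series_ohm * 1000.0


if __name__ == "__main__":
    print(f"current: {short_circuit_current_ma():.1f} mA")
    print(f"power:   {resistor_power_mw():.0f} mW")
```

Both values stay comfortably below the 40 mA pin limit and the rating of a common quarter-watt resistor.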

Circuit design

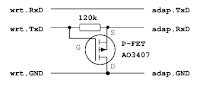

The circuit is pretty simple. A BS170 MOSFET is connected between +5V and PB0, so that the output is only connected when PB0 is low. This MOSFET contains a reverse diode, which allows us to also monitor the output and report it to the USB host. The MOSFET may be replaced with an NPN transistor like a 2N3904 or a BC547, a resistor, and a diode if the voltage monitoring function is desired. Both solutions have been built, tested, and confirmed to work :

The code takes care of disabling the output and the pull-up when the output is not asserted, so that no current even flows through the diode. The finalized boards look like this (before putting them into transparent heat shrink tube) :

Communicating with the chip

The V-USB stack mentioned above provides USB connectivity. This is fine for HID devices, but these devices require a driver on the host (well, that's not totally exact, as they can send keyboard keystrokes, but there's no trivial way to send data without a driver or an application). Another solution consists in emulating a modem using the CDC ACM mode. This is exactly what the AVR-CDC code does. It's important however to keep in mind that CDC ACM over a low-speed device is out of spec, but general-purpose operating systems tend to support it, which is what we're exploiting here.

The AVR-CDC code comes with an example application consisting of a USB-to-RS232 interface implemented entirely in software. It's a good starting point for what we need, so the RS232 code was removed and the USB code was modified to simply manage a watchdog instead. The modified code is available here with the pre-compiled firmware in hex format.

The protocol is very simple : the device presents itself as a serial port on the host, and receives some short commands. The following commands are supported :

- '0' : disables the watchdog

- '1'..'8' : schedules a reset after 2^N-1 seconds (1..255)

- 'OFF' : power off

- 'ON' : power on

- 'RST' : triggers a reset immediately

- 'L0'/'L1' : turns the LED off/on (to test communication without resetting)

- '?' : retrieves the current state as 2 bytes. The first byte indicates the level of the pin, which is set as an input when not off, making it possible to check a power supply's status. '0' indicates it's off (or forced off), '1' indicates it's on (or disconnected). The second byte indicates the number of seconds remaining before reset.
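The '1'..'8' commands map to timeouts of 2^N-1 seconds. The small host-side helpers below spell out that table and pick the shortest command covering a desired delay. The function names are hypothetical and are not part of the firmware or of any tool shipped with it.

```python
def timeout_seconds(digit):
    """Timeout in seconds scheduled by the command digit '1'..'8'."""
    n = int(digit)
    if not 1 <= n <= 8:
        raise ValueError("digit must be between '1' and '8'")
    return (1 << n) - 1  # 2^N - 1


def command_for_timeout(min_seconds):
    """Smallest command digit whose timeout is at least `min_seconds`."""
    for n in range(1, 9):
        if (1 << n) - 1 >= min_seconds:
            return str(n)
    raise ValueError("the longest supported timeout is 255 seconds")


if __name__ == "__main__":
    for n in range(1, 9):
        print(f"'{n}' -> {timeout_seconds(str(n))} s")
```

For instance, a machine that is refreshed every second but may stall for up to ten seconds under load would use '4' (15 seconds) rather than '3' (7 seconds).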

All unknown characters reset the parser, so CR, LF, spaces etc. will not cause any trouble.
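The following is a minimal host-side model of such a parser, useful to reason about what a given byte stream would trigger. It is not the firmware's actual code: the prefix-matching approach, and the choice to retry a rejected character as the start of a new command, are assumptions of this sketch.

```python
COMMANDS = {"0", "1", "2", "3", "4", "5", "6", "7", "8",
            "OFF", "ON", "RST", "L0", "L1", "?"}


def is_prefix(text):
    """True if `text` is the beginning of at least one known command."""
    return any(cmd.startswith(text) for cmd in COMMANDS)


def parse(stream):
    """Return the list of commands recognized in `stream`, in order."""
    found = []
    buf = ""
    for ch in stream:
        candidate = buf + ch
        if not is_prefix(candidate):
            # Unknown sequence: reset the parser, then retry this
            # character alone (an assumption of this model).
            candidate = ch
        if candidate in COMMANDS:
            found.append(candidate)
            buf = ""
        elif is_prefix(candidate):
            buf = candidate  # partial command, wait for more characters
        else:
            buf = ""  # unknown character, parser stays reset
    return found
```

With this model, line endings added by `echo` or a terminal are simply skipped, so "RST\r\n" yields a single 'RST' command.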

All commands which manipulate the timer or the output ('0'..'8', 'ON', 'OFF', 'RST') automatically disable any pending timer. The device takes great care not to influence the output during boot, and the timer doesn't start automatically. This way it's possible to leave it connected inside a machine and to turn it on by software. It may also be used as a self-reset function by writing 'RST' to the device.
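Turning the watchdog on by software then boils down to a loop that keeps rewriting a timeout digit to the serial port. Here is a minimal sketch of such a loop. The `keep_alive` helper and its parameters are hypothetical, and it takes any writable binary file object so it can be tried without the hardware.

```python
import time


def keep_alive(port, digit="4", interval_s=1.0, rounds=None, sleep=time.sleep):
    """Rewrite the timeout command to `port` every `interval_s` seconds.

    `rounds=None` loops forever (stop with Ctrl-C); a number limits the
    loop, which is handy for testing. Returns the number of refreshes sent.
    """
    if digit not in "12345678" or len(digit) != 1:
        raise ValueError("digit must be one of '1'..'8'")
    sent = 0
    while rounds is None or sent < rounds:
        port.write(digit.encode("ascii"))  # re-arms the timer on the device
        port.flush()
        sent += 1
        sleep(interval_s)
    return sent
```

On Linux, CDC ACM devices usually show up as `/dev/ttyACM0` or similar, so a typical call would look like `keep_alive(open("/dev/ttyACM0", "wb", buffering=0))`; the exact device name is an assumption and depends on the system. If the loop stops, for instance because the machine hangs, the last scheduled timeout runs out and the board is reset.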

Testing the module

The tests were conducted on an ATX motherboard with an ATX power supply. The module was connected to a netbook PC. The module was forced off and on, then it was verified that the device didn't reset as long as the timer kept being refreshed, and then that stopping the loop (Ctrl-C) was enough to cause the board to restart. The status and the timer were also read before, during and after a reset :

The whole test setup :

The motherboard tested here (Asus P5E3 WS Pro) seems to only sample the PWR_OK signal on the falling edge for a short time, and decides to start anyway after a few seconds. This prevents controlling the power from outside. It's not dramatic, as it still allows the board to be reset remotely, which is the most important part.

It is likely that some users will want to enable the timer at boot time. It's easy to do in the code anyway. One change could consist in checking pin PB2 to decide whether or not to automatically start the timer at boot. A variation could consist in replacing the output resistor with a relay connected to +5V or +12V, inserted between the mains and the power supply, or between an external power block's output and the board to control. The system is simple and versatile enough for many variations, possibly not even requiring any code update.

For motherboards featuring an internal USB connector, the Digispark board may be the best, as it includes the USB male connector, so it can directly be plugged into the internal connector without any cable. For internal connections, only the PWR_OK wire is needed.

For remote control, it's preferable to place the module outside of the machine with only the two long wires connected to the ATX power connector. These do not pose any particular risk. In case of a short circuit or a connection to the ground, the board will simply be forced off.

Some tests should be conducted to determine whether or not it would make more sense to drive the PS_ON# signal instead. This one is driven low by the board to turn the power supply on. The problem is that some boards wire it to the ground to keep it constantly low. However it would be possible to implement the inverted signal on pin PB2 and decide depending on the motherboard whether to use PWR_OK or PS_ON#.

Downloads and links